The Prisoners' Dilemma is Overrated

The most popular game theory model is probably one of the least useful

This isn’t what I started writing about this past week, but it’s been a big week in terms of crap happening, and, well, when you have a stressful few days it is a lot easier to write about something that annoys you a bit than something that has no emotional valence. It is an important point, and not quite so academic or obscure as it might seem.

Recently in conversation someone made the assertion that the prisoners’ dilemma seems “to blow up the idea of self-interest leading to the best outcomes”. That was certainly not the first time I heard someone make that claim, and frankly, of the people who make it, he was hardly the one who most should have known better. The prisoners’ dilemma (hereafter PD) is possibly the most well known, and certainly the most referenced, of all the game theoretic models. The popularity is almost certainly due to the fact that it is very simple, and more importantly seems to show how individual self-interest can lead to substandard outcomes for all individuals due to defection. Examples range from public goods provision to, well, car radio thieves; really anything where cooperation breaks down and makes everyone worse off can be shoehorned into the PD.

Unfortunately, the PD has a lot of problems. Some of the problems are related to game theory in general, but like most popular things, it has its own super special issues[1].

Wait… what’s the Prisoners’ Dilemma, anyway?

Probably three or four of you are asking that, anyway, so let’s take a quick look, if only to make sure we are all on the same page and I am not using some obscure definition or something. We’ll start with the story.

Two young lads are out causing mayhem one night, and steal themselves an expensive car stereo[2]. Unfortunately for the boys they get picked up later in the night for drunk and disorderly, and whoops, the cops find the stereo in the back of their ride.

Now the boys are not complete idiots and roundly deny everything. The stereo fell off a truck! It was just lying there with all those loose wires attached when they found it! I never stole anything! I was on my way to choir practice!

The cops are not complete idiots either, and decide to separate the boys into their own interview rooms. After letting each one stew for a little, the officer comes in and says to the first kid “Whew, are you in a ton of trouble, son. Your buddy just spilled his guts, and told us everything about how you broke into that car. Now, look, I don’t think you did the whole thing by yourself like he said. You seem like a decent kid, and I’ll just bet it was really his idea, the way he is trying to blame it all on you. Why don’t you tell us your side of the story and set the record straight? Otherwise you are going to take the fall entirely. The DA usually goes easier on folks who are led astray but decide to help the police afterwards.”

Our solo youth, at this point running out of sweat yet strangely needing to urinate desperately, sees that his only hope of lessening his sentence is to rat out his friend and throw himself upon the mercy of the court, and shortly after telling his story signs his confession.

What he doesn’t know is that the officer hasn’t even talked to his friend yet, and is going to feed him the exact same line. By the end of the night both kids are going to have confessed to the crime and will be going to prison[3].

So in this situation our boys face two problems: simultaneous turns and a dominant strategy to defect. Let’s break those down.

Simultaneous turns simply means that each player doesn’t know what the other is going to do before they make their own decision. Each player has to choose what they think is their best move not knowing what the other plans, only guessing what they might do. That is why the prisoners in our story are separated: the boys are told that the other player has already made their move, that they have sold them out or “defected”, but they don’t really know it.

Compare this to sequential turns, where the players can see what the other did before they make a decision, in this case what happens if the boys are interrogated together in the same room. Dave says “We didn’t steal anything,” and Steve can see that Dave said that and concur “Yes, that’s right, we didn’t steal anything!” When Steve and Dave can’t know what the other actually did, each is left guessing, and thinking “What if he did sell me out? What’s my best move then?”

That takes us to the next point, that each player’s dominant strategy is to defect. What’s that mean? Well, it means that no matter what the other player does, a player’s best move is to defect. So no matter what Dave decides to do, Steve’s best outcome is achieved by defecting, and vice versa. To see that a little easier, let’s make a quick diagram.

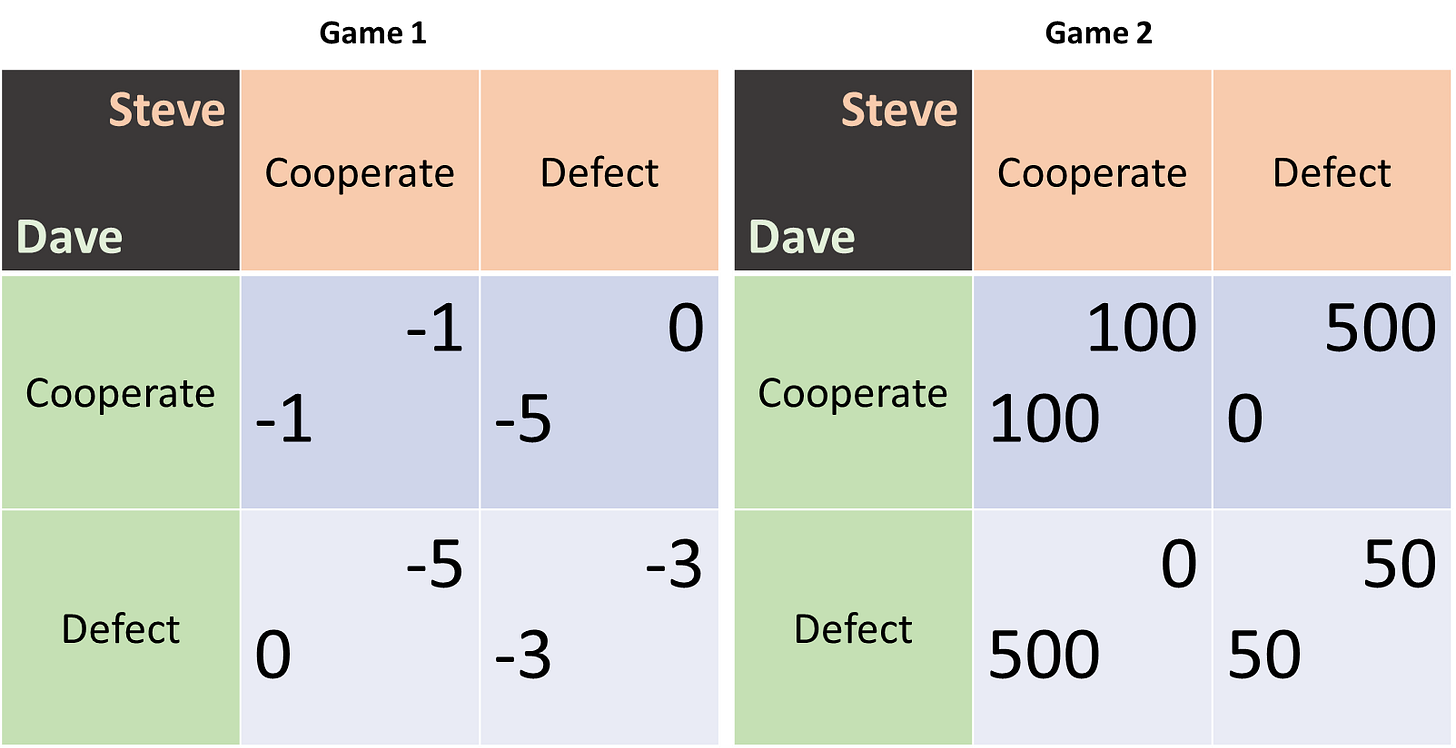

So what we have here is a 2x2 diagram of our situation. We have two players, Steve and Dave, who each have two choices, cooperate and defect. The grid shows the value payoffs for each player based on their choices in some abstract utility (or disutility in this case) value. For instance, if both players cooperate and claim to not have stolen anything (top left cell) they merely get the slightly negative penalty of drunk and disorderly. If Steve rats out Dave while Dave claims they didn’t steal anything (top right cell), Steve gets let go while Dave faces the full sentence. If both rat on each other, both get the rap for stealing, with some consideration from the DA or whatever (bottom right).

So, here’s where dominant strategy comes in. Imagine you are Steve trying to figure out what you should do, not knowing what Dave will do. You can consider what you should do in each case, cooperate or defect.

If Dave decided to cooperate (the cop is lying to you) and you cooperate you have a slight negative outcome, but if you defect you actually break even and get out while Dave takes the rap. Your best outcome (because you don’t care about Dave so much) is to defect.

So what if Dave defects and rats you out? Well, if you cooperate, you take the whole rap, and that’s pretty bad. Like, -5 bad, whatever that means. What if you defect? Well, it still isn’t great at -3, but -3 is still less bad than -5. So Steve’s best option in the case Dave defects is to defect as well.

No matter what Dave does, Steve gets a better outcome from defecting instead of cooperating. That’s what a dominant strategy is: a strategy which is the best thing to do for the player regardless of what the other player chooses. Steve maximizes his outcomes by always defecting, even if he knows Dave will cooperate.

Dave of course has the exact same incentive structure and thus the same dominant strategy. The result of the prisoners’ dilemma then is always the same: both players defect, wind up in the bottom right quadrant, and each gets -3.
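For anyone who would rather check the grid than take my word for it, here is a minimal Python sketch of the dominant strategy test, using the payoff numbers from the story (-1, 0, -5, -3). The names `PAYOFFS` and `dominant_move` are just made up for this illustration.

```python
# Payoffs from the essay, written as (Steve, Dave) for each pair of moves.
# Keys are (Steve's move, Dave's move); "C" = cooperate, "D" = defect.
PAYOFFS = {
    ("C", "C"): (-1, -1),
    ("C", "D"): (-5, 0),
    ("D", "C"): (0, -5),
    ("D", "D"): (-3, -3),
}
MOVES = ("C", "D")


def steve_payoff(steve, dave):
    return PAYOFFS[(steve, dave)][0]


def dave_payoff(steve, dave):
    return PAYOFFS[(steve, dave)][1]


def dominant_move(payoff_for_me):
    """Return the move that is strictly best against every opposing move, or None.

    payoff_for_me(mine, theirs) gives this player's own payoff.
    """
    for mine in MOVES:
        alternatives = [m for m in MOVES if m != mine]
        if all(
            payoff_for_me(mine, theirs) > payoff_for_me(alt, theirs)
            for alt in alternatives
            for theirs in MOVES
        ):
            return mine
    return None


steve_dominant = dominant_move(steve_payoff)
# For Dave, "mine" is the second slot of the key, so swap the arguments.
dave_dominant = dominant_move(lambda mine, theirs: dave_payoff(theirs, mine))
outcome = PAYOFFS[(steve_dominant, dave_dominant)]

print(steve_dominant, dave_dominant, outcome)  # D D (-3, -3)
```

The check is deliberately strict (better against every opposing move), which matches the definition used in the text.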

This is notable, because it is strictly worse for each player than if they had avoided the dominant strategy and both picked cooperate! Each individual is worse off than they would have been otherwise, despite each taking the option that guarantees their best outcome no matter what the other does.

They are also worse off as a group. The sum of their scores if both cooperate is -2. If one cooperates and one defects, the sum is -5. If both defect the sum is -6. The group is worse off because each follows their self interest. The game itself is super negative sum. Anyone finding themselves in this situation is in a lot of trouble.
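The group totals are simple enough to do in your head, but a tiny Python sketch makes the ranking explicit. It uses the same payoff numbers as the story; the variable names are only for illustration.

```python
# (Steve, Dave) payoffs keyed by (Steve's move, Dave's move).
PAYOFFS = {
    ("C", "C"): (-1, -1),
    ("C", "D"): (-5, 0),
    ("D", "C"): (0, -5),
    ("D", "D"): (-3, -3),
}

# Add the two players' payoffs in every cell of the grid.
totals = {moves: sum(pair) for moves, pair in PAYOFFS.items()}

best_for_group = max(totals, key=totals.get)
worst_for_group = min(totals, key=totals.get)

print(totals)           # {('C', 'C'): -2, ('C', 'D'): -5, ('D', 'C'): -5, ('D', 'D'): -6}
print(best_for_group)   # ('C', 'C')
print(worst_for_group)  # ('D', 'D')
```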

The prisoners’ dilemma seems pretty open and shut. Any time an individual’s best interest lies in defecting for a small benefit against bigger losses we should expect everything to break down. If you can rat out or screw over your partners for a small benefit, we should expect that to be the norm, particularly when everyone can do it to each other. Why would anyone ever cooperate? Clearly they won’t, and cooperation is impossible.

Fortunately, humans are pretty clever.

Yea… the primary problem with the prisoners’ dilemma is that it pretty much never works out the way the game predicts, even with prisoners. The Italian and Irish mobs learned how to deal with it in the early 20th century, and they certainly weren’t the first. Modern gangs figure it out as well, and if criminal psychopaths can figure out how to encourage cooperation it seems like normal people should be able to as well, and that’s generally what we see. Not perfectly, but commonly enough that we are rather shocked by cases of, e.g., founders of non-profits taking the money and buying real estate.

Given that cooperation is the norm, what’s going wrong right here? There are a lot of candidates.

1: Repeated games help the problem

One option for fixing this issue is the notion of repeated games. The PD as presented models a one off interaction. If these two kids run together all the time, however, they might have a past history of cooperating to get into trouble, and some foreseeable future of doing the same together. This means they can more credibly commit to choosing cooperate in order to minimize the total costs. Effectively the deal is “I promise to always choose cooperate, so long as you do the same, and we can get through all these at least cost.” This effectively changes the value of choosing cooperate or defect in any particular play through of the game, as choosing defect in one game is going to lower your outcomes for future games, a bit like how eating all your rations today is less appealing if you know you will be hungry for the rest of the week as a result.
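To put rough numbers on that deal, here is a minimal Python sketch under some assumptions that are mine, not part of the story: the game repeats indefinitely, each player discounts the next game by a factor `delta` between 0 and 1, and the promise takes the "grim trigger" form (cooperate until the other side defects once, then defect forever). The payoffs are the ones from the grid.

```python
# Stage payoffs from the essay's grid, from one player's point of view.
T = 0    # I defect, partner cooperates
R = -1   # both cooperate
P = -3   # both defect
S = -5   # I cooperate, partner defects (unused below, listed for completeness)


def cooperate_forever(delta):
    """Discounted value of mutual cooperation in every game."""
    return R / (1 - delta)


def defect_once_then_punished(delta):
    """Defect today against a cooperator, then mutual defection ever after."""
    return T + delta * P / (1 - delta)


def cooperation_is_stable(delta):
    return cooperate_forever(delta) >= defect_once_then_punished(delta)


# Solving the inequality gives delta >= (T - R) / (T - P).
threshold = (T - R) / (T - P)

print(round(threshold, 3))         # 0.333
print(cooperation_is_stable(0.5))  # True
print(cooperation_is_stable(0.2))  # False
```

Under these assumptions the promise holds up as long as each kid weighs tomorrow at least a third as much as today. Change the payoffs or the punishment rule and the threshold moves with them.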

Players maximizing for total game outcomes instead of just the current game does have shortcomings, however. If the players have an idea of how many games are left, things can unravel. Fire up the imagination…

Suppose Steve and Dave have been working their wicked ways for years, through all the ups and downs of heists you would expect in a long running movie series. Now they are getting old and it is time for One Last Job, then they go their separate ways, retiring somewhere sunny without an extradition treaty.

Now, in any heist movie, there is always the tension about whether or not the criminals will betray each other, especially when it is the One Last Job. Why? Because the magic of the repeated game goes away. Without future jobs hanging in the balance, we are back at standard PD dynamics, and expect each player to try and steal all the loot and run, or turn their buddy in, what have you. Each player’s dominant strategy is back to defect, and poof, bad ends for everyone.

But wait, if the Last Job is going to be a bust as cooperation breaks down, what about the Second to Last Job? Why should one cooperate now to ensure cooperation in the future knowing that you will defect later? One should defect, and make this penultimate job the One Last Job by surprise, and get out while the getting is good. But… wait… why not cut out at the Third to Last Job?

That’s the problem of “unraveling”, where any fixed ending of jobs implies a point where you are better off cutting and running, which makes the rest of the repeated games point moot. Now, this is helped a little if you don’t know for certain that any given job is the last, any given game the final, but it doesn’t help a lot. Even if the games continue with some probability in theory and so are sort of infinite, in reality we know that doesn’t apply. Even the suspicion that your partner may view this as the last game makes unraveling likely. If I think you are retiring I have no more reason to trust you because I can’t hold my future defection over your head.
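The unraveling argument is backward induction, and it can be sketched in a few lines of Python. This assumes a known, fixed number of jobs and no discounting, with the stage payoffs from the grid; `unravel` and `best_reply` are names invented for this sketch.

```python
MOVES = ("C", "D")

# My payoff for (my move, partner's move), taken from the essay's grid.
STAGE = {("C", "C"): -1, ("C", "D"): -5, ("D", "C"): 0, ("D", "D"): -3}


def best_reply(theirs, continuation):
    """My best move today, given the partner's move and the value of later jobs.

    Later play is already pinned down when we work backward, so the
    continuation value is the same whatever I do today.
    """
    return max(MOVES, key=lambda mine: STAGE[(mine, theirs)] + continuation)


def unravel(total_jobs):
    """Solve a fixed-length series of jobs from the last job back to the first."""
    plan = {}
    continuation = 0.0  # nothing comes after the One Last Job
    for job in range(total_jobs, 0, -1):
        replies = {best_reply(theirs, continuation) for theirs in MOVES}
        if len(replies) != 1:
            raise ValueError("no single best move at this job")
        move = replies.pop()
        plan[job] = move
        continuation += STAGE[(move, move)]  # both players reason the same way
    return [plan[job] for job in range(1, total_jobs + 1)]


print(unravel(3))  # ['D', 'D', 'D']
```

Because the future is already settled by the time each earlier job is considered, nothing done today can change it, and the one-shot logic takes over at every step.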

One possible solution to that problem is reputation. Maybe you are going to retire but your kid wants to get in on the heist business, and no one will work with him if you have a bad reputation. Or I don’t want to defect on you because I want to work with your kid, who acts as an extension of your past and future behavior.

That kind of works, but is outside the model. That leads us to the next issue with using the model to predict reality.

2: Games within games fix the problem

Repeated games get us towards the idea of nested games, each individual game being part of a bigger game made up of a series of identical games. But what if we mix and match game models, and maybe throw in different people?

Suppose that we model more of Steve and Dave’s relationship. Instead of being random kids thrown together and caught in a crime with no history or future, what if they have been doing this for a while? Suppose they are friends who do this sort of thing a lot, stealing things to sell, splitting the proceeds. Sometimes things go to hell and they get caught, but that’s part of the risk.

In this case, we might imagine that defecting when caught by the police represents a decision to quit the extended game entirely; neither will engage in crime again with someone who rats on them even once. If getting caught is relatively low probability, then cooperating is much easier, because taking a small lump in the case of getting caught is totally worth the good outcomes of crime in normal times.

So here we have a slightly more complicated game. It starts with the decision to commit some crime; presumably both players have to agree to that for it to happen. Conditional on having decided on the heist, there is now some probability that Steve and Dave get caught. If they do get caught, they get the nasty PD situation. If they don’t get caught, they divide up the loot as some positive number. This game is then repeated.

This presents some new solutions to the “dominant strategy is to defect” issue. We need to remember that in the standard prisoners’ dilemma model, the question was cooperating against the police, but that’s not really what these two criminals were up to together. In the general sense, we are less interested in people cooperating when things go to hell, but rather in whether they are going to even start cooperating to do something that benefits them both. If we include the good outcome where the PD doesn’t even come up, cooperation to execute the heist is a lot easier, and the promise of engaging in future heists only if your partner doesn’t defect has a lot more weight. Effectively Steve and Dave can agree that while those -1s for cooperating are worse than a 0, they are more than made up for by splitting up 10s in the future, so each can credibly promise not to defect because they don’t want to lose that future.

In fact, there is even another option here: maybe Steve doesn’t care too much if Dave defects, and vice versa. That seems kind of crazy, but if there is a pretty low chance of getting caught relative to the payout, maybe Steve can defect on Dave and Dave will still want to go on future heists (when he is out of prison, presumably.) Why? Because if, say, there is a 33% chance of getting caught[4], and the spoils are divided equally if not caught (5 each), that means the expected value for any given heist is the probability of getting caught times the penalty from playing PD plus the probability of not getting caught times the benefit. In math[5]:

EV(Dave) = p(caught)*outcome(PD) + p(not caught)*(5) = (1/3)*o(PD) + (2/3)*(5)

EV(Dave) = (1/3)*o(PD) + 3.33

So, what’s Dave’s expected value? Well, that depends on what the outcome of the prisoners’ dilemma is, and we know those: -1, 0, -5, -3. If Dave believes that Steve will rat him out, the outcomes of the PD are either -5 or -3, depending on his action. The probability of getting caught (rounding 33% to one in three) times the outcome of the PD then is either -1 (if Dave defects too) or about -1.67 (if Dave cooperates anyway). Add in the probability of not getting caught times the value of the heist, and Dave is looking at an expected value of the heist of about 2.33 or 1.67, respectively, even if he believes Steve will send him up the river if caught. That is to say, Dave’s expected value of the heist with Steve is still positive! Assuming Dave doesn’t have anything better to do than go on heists with Steve (i.e. nothing to do with an expected value >=1.67) we can expect Dave to still agree to go on heists with Steve even after being defected against and expecting future defections.
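The same arithmetic as a minimal Python sketch, for anyone who wants to fiddle with the inputs. It treats the chance of getting caught as exactly one in three and uses exact fractions, so the results can differ from hand-rounded figures in the second decimal place. The function name `heist_ev` is made up for this illustration.

```python
from fractions import Fraction

P_CAUGHT = Fraction(1, 3)  # the essay's "33%", taken as exactly one in three
LOOT_SHARE = 5             # each partner's share when the heist goes well


def heist_ev(pd_outcome, p_caught=P_CAUGHT, loot=LOOT_SHARE):
    """Expected value of one heist, given what the PD would pay if caught."""
    return p_caught * pd_outcome + (1 - p_caught) * loot


# Dave expects Steve to rat him out, so his PD payoff is -3 or -5.
ev_if_dave_defects_too = heist_ev(-3)
ev_if_dave_cooperates = heist_ev(-5)

print(ev_if_dave_defects_too, round(float(ev_if_dave_defects_too), 2))  # 7/3 2.33
print(ev_if_dave_cooperates, round(float(ev_if_dave_cooperates), 2))    # 5/3 1.67
```

Both values are positive, which is the whole point: even the pessimistic Dave still comes out ahead by signing up for the heist.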

Of course, that is the worst case, where Dave can’t hold out on Steve if Steve defects. We can still apply the normal palliative value of repeated games to the situation and use the specter of losing future value to encourage cooperation now. A rule of “defect once and I will never team up with you again” can create a lot of leverage.

Note that the mere probability of getting caught instead of starting with the assumption of having been caught corrects the “One Last Job” problem, too. Unraveling is less likely to happen if there is only the possibility of bad outcomes instead of just balancing off some bad now against less bad in the future. Sure, low enough value on future heists and/or high enough probabilities of getting caught change the numbers, but that’s the point: those matter quite a bit to determining how players behave inside the PD part of the game.
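Here is a rough Python sketch of how those numbers feed into the choice inside the interrogation room. The assumptions are mine and fairly heavy: the partner stays quiet when caught, a defector is never invited on another heist and earns 0 from then on, and future heists are discounted by a factor `delta`. The function names are hypothetical.

```python
def cooperative_heist_ev(p_caught, loot):
    """Expected value of one heist when both partners stay quiet if caught (-1)."""
    return p_caught * (-1) + (1 - p_caught) * loot


def best_move_when_caught(p_caught, loot, delta):
    """Compare staying quiet (and keeping the partnership) with ratting out.

    Assumes the partner stays quiet, a defector earns 0 from then on,
    and future heists are discounted by delta (0 <= delta < 1).
    """
    future = delta * cooperative_heist_ev(p_caught, loot) / (1 - delta)
    stay_quiet = -1 + future  # small lump now, more heists later
    rat_out = 0.0             # walk free now, no more heists
    return "C" if stay_quiet >= rat_out else "D"


print(best_move_when_caught(1 / 3, 5, 0.5))  # C
print(best_move_when_caught(1 / 3, 5, 0.1))  # D
print(best_move_when_caught(0.9, 5, 0.5))    # D
```

With the story's numbers the partnership is worth protecting unless the future barely counts. Push the odds of getting caught high enough and the future stops being worth anything, so defecting wins again.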

We can even imagine another solution to the PD problem nesting games creates. Imagine Steve and Dave have some mutual acquaintances, maybe brothers or cousins, around where they live. Maybe all these social connections are aware of the deals and heists Steve and Dave get up to. What happens if Steve sells out Dave, and Steve’s friends find out?

I posit the answer is Steve has a very bad time, indeed.

This bad time might be anything from creatively restructured knees, to a free night’s sleeping accommodation at the bottom of the nearest scenic body of water, to perhaps just a loss of reputation that limits Steve’s future options as mentioned above. The existence of outside parties who may have moves in other parts of the nested game dramatically changes the nature of the situation. Relying on your partner to choose cooperate when the cops put the screws to you is a lot easier when you both know that your extended family and friends will punish either of you for defecting.

Plus, it doesn’t have to be all stick. Maybe those extended family members will reward cooperation. Not in the “you get to keep your knee caps” kind of way, but by taking care of your family while you are in prison, enhanced reputation, maybe even saving you a bigger cut of future loot, whatever.

External carrots and sticks are both ways of making credible commitments to behave in particular ways under pressure that people can rely on when entering into uncertain cooperative endeavors. Effectively these pre-existing relationships built out as extended games change the outcome values of choosing different moves within the prisoners’ dilemma game, allowing for certain outcomes to be achieved.
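A small Python sketch of what "changing the outcome values" can look like. The `stick` and `carrot` amounts are hypothetical numbers picked only to show the effect; nothing in the story says how much a restructured knee costs in utils.

```python
# My payoff for (my move, partner's move), from the essay's grid.
BASE = {("C", "C"): -1, ("C", "D"): -5, ("D", "C"): 0, ("D", "D"): -3}


def with_outside_parties(stick=0.0, carrot=0.0):
    """Subtract a penalty from every defection and add a reward to every cooperation."""
    adjusted = {}
    for (mine, theirs), payoff in BASE.items():
        if mine == "D":
            payoff -= stick
        else:
            payoff += carrot
        adjusted[(mine, theirs)] = payoff
    return adjusted


def dominant_move(payoffs):
    """Return the move that is strictly best against both partner moves, or None."""
    for mine, alt in (("C", "D"), ("D", "C")):
        if all(payoffs[(mine, t)] > payoffs[(alt, t)] for t in ("C", "D")):
            return mine
    return None


print(dominant_move(with_outside_parties()))                       # D
print(dominant_move(with_outside_parties(stick=1.5)))              # None
print(dominant_move(with_outside_parties(stick=4.0)))              # C
print(dominant_move(with_outside_parties(stick=1.5, carrot=1.0)))  # C
```

A modest stick is enough to remove the dominant strategy, and a bigger one (or a stick plus a carrot) flips it to cooperate.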

In fact, this works so well that it suggests a whole other problem with the prisoners’ dilemma as a model for human behavior…

What are the numbers in that box, anyway?

To be fair, this is a problem across game theory as a whole, not just the prisoners’ dilemma, but the PD highlights it nicely: Where the hell do those outcome score values things come from?

At a high level, we can say “well, it is the negative value of that outcome, in utils. Or kilo-utils, whatever.” Of course that is basically the same as saying “we made up roughly how bad they are relative to each other, only.” So maybe we say they are dollar amounts. Economists love that sort of thing, because at least it lets you compare how much more a criminal hates going to prison for 2 years compared to how much he hates 5 years, or getting a root canal, or how much he likes french fries. Kind of.

Look, can’t we just shut up and do math?

Well, no, not if you want the math to relate to reality at all.

First of all, we already talked about what happens if Steve and Dave’s friends and family can reasonably be expected to take revenge for defection. That means those (0,-5) and (-5,0) outcomes are just way off. This especially gets awkward as you get more detail; what if Dave’s family is super revengy about that sort of thing, while Steve’s isn’t? How do we rewrite Steve’s values if Steve doesn’t know exactly how likely he is to have body parts removed, and just what body parts they are? What about the effects of reputation?

What if Dave and Steve don’t see prison as being equally worrisome?

What if Dave is obsessed with honor and would feel extremely ashamed if he defected, even if it meant going to prison?

How much does Steve value having a partner who is so obsessed with honor?

Yea… basically if you know everything about the two players you can more reliably predict their behavior. Which is great, but at that point you didn’t really need game theory, did you?

You certainly didn’t need the PD because whether or not the game even counts as a PD is based on those numbers in the boxes!

That’s right, we assumed the outcome of the model when we chose the model, and we chose the model when we assigned the values to each outcome.
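To make that concrete, here is a minimal Python sketch that labels a symmetric 2x2 game by the ordering of its four payoffs. The letters T, R, P, S and the orderings used are the usual textbook conventions rather than anything from the story, and the "shame" example is a hypothetical number chosen for illustration.

```python
def classify(T, R, P, S):
    """Label a symmetric 2x2 game by the ordering of its payoffs.

    T: I defect, you cooperate    R: we both cooperate
    P: we both defect             S: I cooperate, you defect
    """
    if T > R > P > S:
        return "prisoners' dilemma"
    if T > R > S > P:
        return "chicken"
    if R > T >= P > S:
        return "stag hunt"
    return "something else"


# The essay's grid.
print(classify(T=0, R=-1, P=-3, S=-5))       # prisoners' dilemma

# Taking the whole rap is now milder than mutual defection.
print(classify(T=0, R=-1, P=-3, S=-2))       # chicken

# Hypothetical: defecting carries a shame cost of 1.5 for an honor-obsessed player.
print(classify(T=-1.5, R=-1, P=-4.5, S=-5))  # stag hunt
```

Nudge one or two numbers and you are no longer playing the PD at all, which is why the choice of numbers does so much of the work.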

Let’s look at that a little more.

Things get strange when you change the numbers a little

A few years back I attended a seminar at a conference where a young guy presented a paper called something like “Behavioral Game Theory.”[6] His point was that, when played by actual humans, the dynamics of a game, the tendencies that drive things like dominant strategies, depend as much on the absolute values of the outcomes as on the relative values. Let's have an example:

Here we have two Games, 1 and 2[7]. The first is the PD we have been talking about, and the second is… well a little different. The outcomes are positive, so that’s nice for everyone, for a start. But note that the dynamics are still the same: the dominant strategy is still to defect. Effectively these are two versions of the PD, and game theory predicts that they should have the same outcome.

Yet, here’s the kicker: in actual tests with humans they absolutely do not.

According to the paper’s presenter, if you throw experimental subjects into the normal PD game they behave just about exactly as you would expect, with most people choosing to defect. If you throw them into Game 2, however, nearly everyone chooses cooperate. Same situation, no one can see or communicate with who they are playing against (or even know it is a person and not a computer), yadda yadda, same thing, different results.

I would have been skeptical about this at first, except that I knew this to be true in many other game models. The Ultimatum Game has a similar pattern, with theory suggesting wildly different results than what ordinary humans achieve[8].

The behavioral part of the talk was basically saying that while the dynamics of the system are the same, the absolute values matter for various reasons. Part of that might be that humans view losses more negatively than they view gains positively[9]. Part of it might be a leap of faith: one figures that if both people can be better off by cooperating, one should cooperate, assuming the other player is thinking the same thing.
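The losses-versus-gains point can be sketched with a toy Python calculation. It assumes the rough 2:1 ratio mentioned in the footnotes, applied as a flat multiplier on losses; that is a crude stand-in for how people actually weigh outcomes, and `felt_value` is a name invented for this sketch.

```python
LOSS_AVERSION = 2.0  # a loss hurts about twice as much as an equal gain (assumed ratio)


def felt_value(amount, loss_aversion=LOSS_AVERSION):
    """How an outcome feels: gains count at face value, losses count extra."""
    return amount if amount >= 0 else loss_aversion * amount


def expected_value(gamble):
    """Plain expected value of a list of (probability, amount) pairs."""
    return sum(p * amount for p, amount in gamble)


def felt_value_of_gamble(gamble):
    """Expected value after losses have been weighted by loss aversion."""
    return sum(p * felt_value(amount) for p, amount in gamble)


coin_flip = [(0.5, 100), (0.5, -100)]

print(expected_value(coin_flip))        # 0.0
print(felt_value_of_gamble(coin_flip))  # -50.0
```

A fair coin flip that breaks even on paper feels like a losing bet, so a game whose payoffs are all negative may be played quite differently from one with the same ordering but positive payoffs.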

What is strange to me, as an economist and therefore apparently kind of bad at playing games with humans, is that people wouldn’t just pick defect, and figure that worst case they get $50 and best case $500. If you could communicate, you could even promise to split the $500 if they cooperated…

Anyway, that’s the problem here: not only are the dynamics important, and dependent on the relative values you put into the grid, thus defining the type of game and its outcomes, but how the real humans whose behaviors we are trying to model actually behave depends on the absolute values of the outcomes, not just the relative values.

That’s pretty well damning of game theory in general as a tool for predicting human behavior, but particularly it means the prisoners’ dilemma should not be taken as a generalized tool because there are many unknown values that go into the model, and as testing against reality shows we are very bad at guessing what those values are.

Whither game theory?

Just to wrap up, I want to mention that game theory isn’t all bad. It is kind of pants for predicting human behaviors using simple games, because the dynamics of the games, the layers of other games they might be nested in, and the payoff values of various moves are all over the place and impossible to know. Once you do know enough of them, you hardly need game theory to intuit the outcome.

On the other hand, game theory is pretty handy for actual game design. That is, if you are designing a board game or video game, or a particularly questionable type of online store or auction, you actually can dictate a lot of those outcome values as well as the nested games going on. As a result, game theory can allow you to create some really interesting interactions by changing how players make decisions and in what order. Consider how much more boring poker would be if everyone played with hands showing. You could do it, and it would still be a game, but it would not be as fun. Game theory helps design more interesting interactions for real people in contrived situations.

Just don’t think you can go the other way, and please stop applying the prisoners’ dilemma to everything :)

Thanks for reading! This was long and kept getting interrupted by work and home life stuff, but as I said, as other things annoyed me I was able to redirect towards thinking of this. A follow up essay to the one about emergent ant and human societies is on the workbench as well! Thanks again!

[1] Although presumably none could be described as “daddy issues.”

[2] This is probably dating me… do cars have stereos that matter anymore?

[3] Whew… managed to finish writing that without laughing. We all know they aren’t going to see the inside of a cell unless it was the mayor’s car stereo. Unless it is discovered they weren’t vaccinated…

[4] I wish crime statistics were such that 33% wasn’t a crazy high number… alas.

[5] Ok, maybe less “math” and more “Hammer’s idiosyncratic pseudocode.”

[6] Apologies if I am misremembering the title of the paper. It wasn’t published yet so I didn’t have a copy, and I can’t remember names well to start with.

[7] Again, probably not the exact example or numbers in the presentation I remember from like six years ago. It is possible his version was all negatives, just really big negative instead of small. Mistakes are all mine, credit for the idea is all his.

[8] I point out “ordinary humans” because game theorists and economists are absolute pants at the Ultimatum Game, failing far more frequently.

[9] That is, we hate losing $100 more than we like to gain $100, so if we were presented a gamble of flipping a coin and winning $100 on heads and losing $100 on tails, most people would turn it down. That seems to be true… the ratio for most people (who don’t like to gamble just for fun) is apparently something like 2:1, that is, a loss hurts twice as much as an equivalent gain.

Enjoyed this and it led me back down the rabbit hole I first entered by reading The Evolution of Cooperation by Robert Axelrod. Computer-generated strategies for PD-style games often seem to advantage ‘be nice but make it clear that you aren’t going to be anyone’s schmuck’, but even that gets complicated.

Sorry life’s chucking shit on the windshield. Same here at the moment 🤷♂️

Hi Doc Hammer. I am sorry you have been having a bad week. Perhaps this paper about Functional Decision Theory by Eliezer Yudkowsky and Nate Soares will cheer you up. It's a fun read, assuming that you find mathematics (and logic) fun. And it is another way to discover that PD isn't all it's cracked up to be.

https://arxiv.org/pdf/1710.05060.pdf